Claude Shannon’s nightmare.

There are various kinds of remote sensing images: drone, aerial, satellite, multi-temporal, multi-spectral, … and each product has its strengths and weaknesses. What if we could synthesize a “super-resolution” sensor that combines the advantages of all these image sources? For instance, what if we could fuse (1) a high spatial resolution sensor (you know, the one that passes over your ROI once a year) and (2) a high temporal resolution sensor (…whose images can’t even show you where your house is!) to derive one synthetic super sensor with both high spatial and high temporal resolution?

Today’s data-driven deep learning techniques achieve state-of-the-art results, and it would be crazy not to consider them.

Super-resolution Generative Adversarial Networks

In their paper (“Photo-Realistic Single Image Super-Resolution Using a Generative Adversarial Network”, which is quite a good read), Ledig et al. propose an approach to enhance low resolution images. They use a Generative Adversarial Network (GAN) to upsample and enhance a single low resolution image so that the result looks like the target (high resolution) image. A GAN consists of two networks: the generator and the discriminator. On the one hand, the generator transforms the input image (the low-res one) into an output image (the synthesized high-res one). On the other hand, the discriminator takes two images as input (one low-res image and one high-res image) and produces a signal that expresses the probability that the second image (the high-res one) is real. The objective of the discriminator is to detect “fake” (i.e. synthesized) hi-res images among real ones. Simultaneously, the objective of the generator is to produce good-looking hi-res images that fool the discriminator and that are also close to the real hi-res images.
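To make the two competing objectives concrete, here is a minimal sketch in plain Python. It is not the code from the paper nor from this post: the function names are made up for illustration, the content term is a simple L1 distance standing in for whatever content loss a real implementation uses, and the `adv_weight` default is only an assumed weighting.

```python
import math

def bce(p, is_real, eps=1e-12):
    """Binary cross-entropy of a predicted probability p against a real/fake label."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -math.log(p) if is_real else -math.log(1.0 - p)

def discriminator_loss(p_real, p_fake):
    """The discriminator wants p_real close to 1 and p_fake close to 0."""
    return bce(p_real, True) + bce(p_fake, False)

def l1_distance(a, b):
    """Mean absolute difference between two flat lists of pixel values."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def generator_loss(p_fake, fake_pixels, real_pixels, adv_weight=1e-3):
    """The generator wants to stay close to the real hi-res image (content term)
    and to make the discriminator believe its output is real (adversarial term).
    adv_weight is an assumed value, not one taken from this post."""
    content = l1_distance(fake_pixels, real_pixels)
    adversarial = bce(p_fake, True)
    return content + adv_weight * adversarial

# Toy values: p_* are discriminator outputs, pixel lists are flattened patches.
d_loss = discriminator_loss(p_real=0.9, p_fake=0.2)
g_loss = generator_loss(p_fake=0.2, fake_pixels=[0.1, 0.5], real_pixels=[0.2, 0.4])
```

In a real training loop both losses would be computed on batches of patches and minimized alternately, one step for the discriminator, one step for the generator.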

From images to remote sensing images

Now suppose we have some high-res remote sensing images (say Spot 6/7, with 1.5m pixel spacing) and some low-res remote sensing images (like Sentinel-2, with 10m pixel spacing), and we want to enhance the low-res images to the same resolution as the high-res ones. We can train our SRGAN generator over some patches and (try to…) make Sentinel-2 images look like Spot 6/7 images!
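One practical detail is that 10m / 1.5m is not an integer ratio, so patch sizes have to be chosen with some care if a low-res patch and a high-res patch are to cover exactly the same ground footprint. The sketch below only does that arithmetic; the helper name and the example patch sizes are illustrative and are not taken from the actual training setup.

```python
from fractions import Fraction

LOW_RES_SPACING = Fraction(10)      # Sentinel-2, metres per pixel
HIGH_RES_SPACING = Fraction(3, 2)   # Spot 6/7, metres per pixel

# Upscaling factor between the two grids: 10 / 1.5 = 20/3 (about 6.67)
SCALE = LOW_RES_SPACING / HIGH_RES_SPACING

def matching_high_res_size(low_res_size):
    """Size in pixels of the high-res patch covering the same ground
    footprint as a low-res patch of low_res_size pixels."""
    size = low_res_size * SCALE
    if size.denominator != 1:
        raise ValueError(
            f"{low_res_size} low-res pixels do not map to a whole number "
            "of high-res pixels; use a multiple of 3"
        )
    return int(size)

# A 48-pixel Sentinel-2 patch covers 480 m, i.e. 320 pixels at 1.5 m.
hr_size = matching_high_res_size(48)
```

Using exact fractions rather than floats avoids rounding surprises when checking whether two patch sizes really line up.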

First, I downloaded a Sentinel-2 image from the Theia data center. The image is a monthly synthesis of multiple Sentinel-2 surface reflectance scenes produced with MAJA software (see here for details). The image is cloudless, thanks to the amazing work of the CNES and CESBIO guys. I also used a pansharpened Spot 6/7 scene acquired in the same month as the Sentinel-2 image, in the framework of the GEOSUD project.

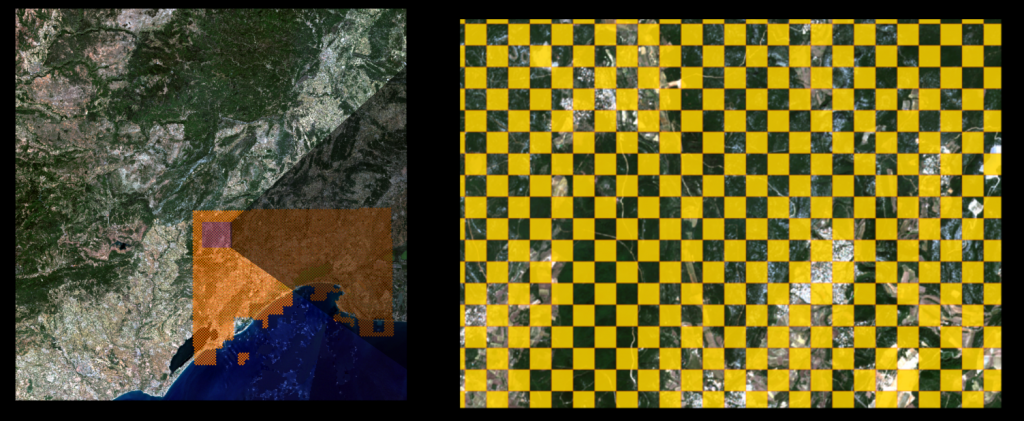

The tools of choice for this are the OrfeoToolbox with OTBTF (a remote module that uses TensorFlow). First, we extract some patches from the images (using otbcli_PatchesExtraction).

Once this is done, we can train our generator over these patches using some SRGAN-inspired code (there are many SRGAN implementations on GitHub!). Once the model is properly trained, we can transform our Sentinel-2 scenes into images with 1.5m pixel spacing using the otbcli_TensorflowModelServe application (the first time I had to set an outputs.spcscale parameter lower than 1.0!). The synthesized high resolution Sentinel-2 image has a size of 72k by 72k pixels.
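As a quick sanity check on those numbers, here is a small sketch of the arithmetic. It assumes that the spacing scale is simply the ratio of output to input pixel spacing (which is what a value lower than 1.0 suggests) and that the input is a full Sentinel-2 tile of 10980 pixels per side; both are assumptions, and the function names are made up for illustration.

```python
def spacing_scale(in_spacing_m, out_spacing_m):
    """Ratio of output to input pixel spacing (below 1.0 means finer pixels)."""
    return out_spacing_m / in_spacing_m

def output_size(input_size_px, scale):
    """Pixels per side of the output image for a given spacing scale."""
    return round(input_size_px / scale)

scale = spacing_scale(in_spacing_m=10.0, out_spacing_m=1.5)

# Assumed input: a full Sentinel-2 tile, 10980 pixels per side at 10 m.
size = output_size(10980, scale)
print(f"spacing scale: {scale:.2f}, output: {size} x {size} pixels")
```

Under these assumptions the output is a little over 73,000 pixels per side, the same order of magnitude as the 72k by 72k image mentioned above.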

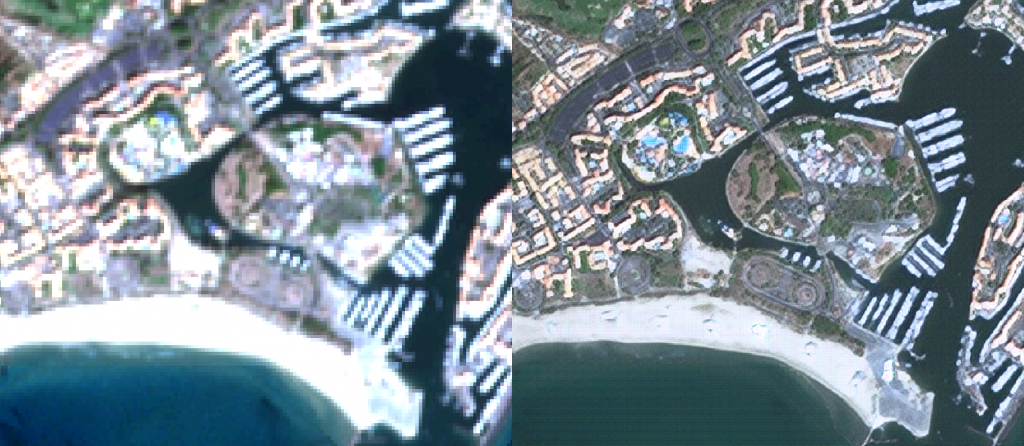

Below are a couple of illustrations. Left: the original RGB Sentinel-2 image. Right: the enhanced RGB Sentinel-2 image. For the sake of honesty, we show images that are far away from the training patches. You can enlarge them to full resolution.

Full map

We put the entire generated image on a cartographic server. You can browse the map freely (select “S2_RGB (High-Res)” from the layer menu to display the 1.5m Sentinel-2 image). You can also display the footprints of the patches used for training (“Training patches footprint” layer).

Conclusion

In this post we showed what deep learning can offer in terms of data-driven image enhancement for remote sensing images, at least for illustrative use.

Code

The code is available on GitHub. Feel free to open a PR to add your own model, training loss, etc.!